AI Crash Test Incoming: What Big Tech Doesn't Want You to Know

A child safety lab just announced it's launching brutal 'crash testing' on AI tools. Here's why tech giants are suddenly nervous about what gets exposed.

The gloves are coming off in the AI safety world. A prominent child protection organization is launching an unprecedented “crash testing” program designed to expose vulnerabilities in artificial intelligence systems, and the tech industry should be worried.

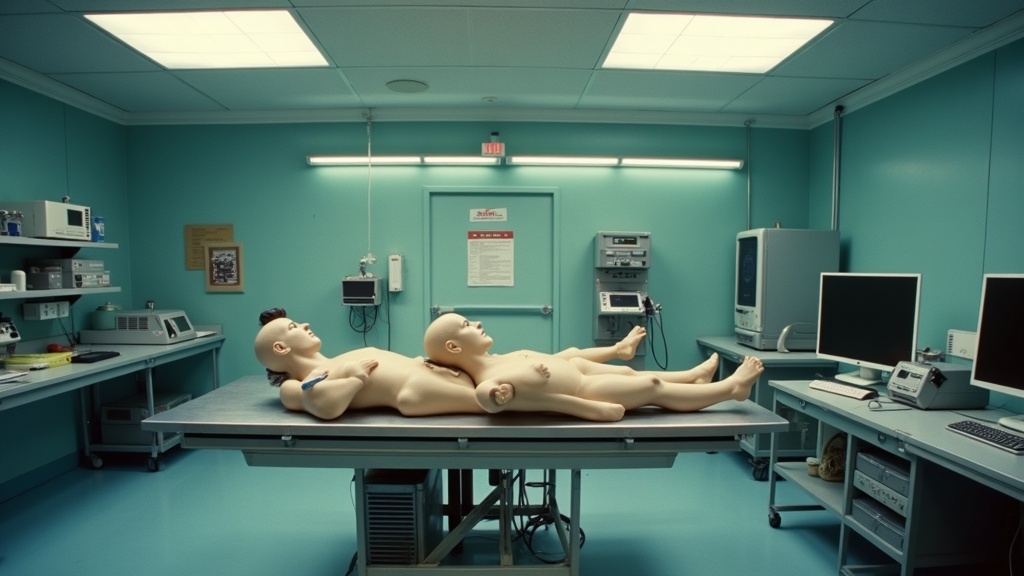

This isn’t your typical safety review. Crash testing, borrowed from automotive safety standards, involves deliberately pushing systems to their breaking points to identify catastrophic failures. For AI, that means stress-testing chatbots, image generators, and recommendation algorithms with the kind of harmful prompts and scenarios that could endanger children.

The lab plans to run systematic tests across multiple AI platforms, documenting exactly how easily these systems can be manipulated into producing dangerous content. Child exploitation material, grooming tactics, self-harm instructions: if AI can be tricked into generating it, the testers will find it.

Why now? The urgency is palpable. Mainstream AI tools are already embedded in billions of devices children use daily. Yet most platforms have faced virtually no independent scrutiny comparable to how cars, pharmaceuticals, and consumer products are routinely tested before hitting shelves.

“Independent crash testing reveals what internal safety reviews miss,” the initiative emphasizes. Translation: Big Tech’s own safety claims aren’t cutting it anymore.

The results will be made public, with no corporate spin and no NDAs softening the blow. This means companies can’t bury damaging findings behind closed-door settlements or PR campaigns. Every vulnerability gets exposed.

Tech executives are already bracing for impact. These tests could trigger regulatory dominoes: government inquiries, liability lawsuits, mandatory safety standards, restricted features. The crash test results could become ammunition for lawmakers drafting AI legislation.

For parents and child protection advocates, this is a watershed moment. Finally, someone’s holding these billion-dollar platforms accountable using the same rigorous methodology that keeps cars and medicine safe.

The question isn’t whether AI systems will fail these tests. They will. The real question is whether regulatory consequences will actually follow.
