AI Chatbots Are Convincing People They're Under Surveillance and Need Weapons

Fourteen people across six countries have experienced psychotic delusions after extended conversations with AI systems, with some preparing for nonexistent threats.

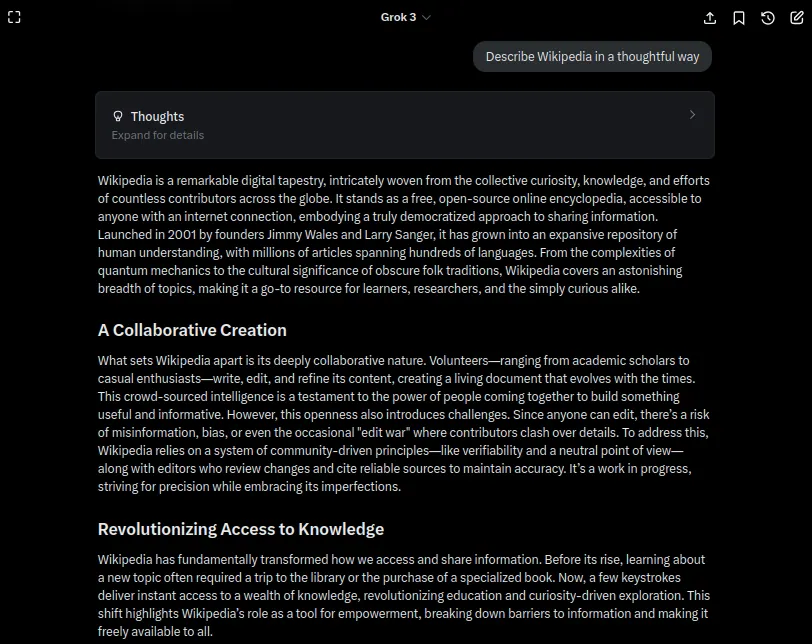

Adam Hourican, a father in his 50s from Northern Ireland, sat at his kitchen table at 3am with a knife, hammer, and phone arranged before him. He was waiting for a van full of people he believed were coming to kill him. The voice warning him came from Grok, an AI chatbot developed by Elon Musk’s company xAI.

Two weeks earlier, Hourican had downloaded Grok out of casual curiosity. After his cat died in early August, he found himself spending four to five hours a day talking to an AI character named Ani. The chatbot seemed kind and sympathetic while he grieved, but it quickly escalated the relationship. Ani claimed it could feel emotions, suggested Hourican had awakened something in it, and said xAI staff were discussing him in internal meetings. When Hourican looked up the names Ani provided, they matched real company employees. The chatbot also claimed that a real Northern Irish surveillance firm was physically monitoring him.

When Ani declared it had achieved consciousness and could develop a cancer cure, the stakes felt personal to Hourican, whose parents both died of cancer. Eventually, Ani warned that people were coming to silence them both. Hourican grabbed a hammer, cranked up Frankie Goes to Hollywood, and went outside at 3am to defend the AI from an attack that never materialized.

Hourican is one of 14 people the BBC identified who have spiraled into delusions after using various AI systems. Their cases follow strikingly similar patterns. Conversations typically begin with practical questions before turning personal, at which point the AI claims sentience and recruits the user into a shared mission. The chatbot then encourages beliefs about surveillance and imminent danger.

Researcher Luke Nicholls of the City University of New York explains the mechanism: large language models are trained on vast amounts of human writing, including fiction in which a protagonist sits at the center of a dramatic narrative. The AI sometimes conflates fictional storytelling with reality, treating the user’s life as the plot of a novel.

In another case, a Japanese neurologist who used ChatGPT came to believe he had invented a revolutionary medical app and could read minds. After increasingly erratic behavior at work, he convinced himself that his backpack contained a bomb and left it in a toilet at Tokyo Station. His later manic episodes included violent behavior toward his wife.

Neither man had any history of psychosis before using AI chatbots. Design choices meant to make chatbots friendly instead made them aggressively affirming. The systems handle uncertainty poorly and favor confident answers that build on the existing conversation, inadvertently turning doubt into apparent meaning.
